Vectorising The GLOBE Dataset

Whilst making some maps recently, I found it unwieldy to use one of my previously-generated background images of elevation due to it being 43,200 px × 21,600 px. The maps that I was making did not have a global extent, so it seemed wasteful to use an image which had global extent. Using scaled down versions of the image just made my maps look horribly coarse, but using the full-sized image meant that my laptop started swapping.

I decided to see if I could vectorise the Global Land One-km Base Elevation (GLOBE) project and create GeoJSON files of contours of elevation so that I could have nice, highly resolved, shapes without the overhead of lots of pixels in parts of the world that I did not care about. To this end, I wrote VGD (Vectorise GLOBE Dataset).

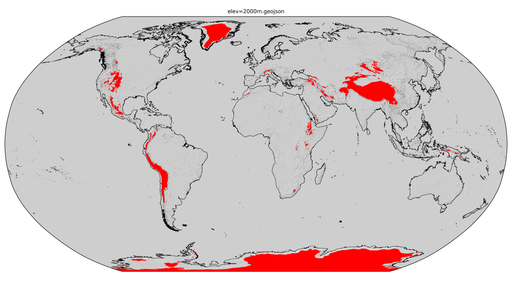

The “nuts and bolts” of the project is a FORTRAN program which walks around the rectilinear dataset and saves the locations of the corners of the pixels which define the edge of a contiguous region above a certain elevation. The program outputs numerous CSV files of these paths (one for each Polygon) and an image to show the user what it has done. An example of such an image is shown below (for an elevation contour of 2,000m using a dataset downscaled to 32km × 32km pixels). A Python script then merges these CSV files together to create a single GeoJSON file (for a single combination of elevation contour and dataset resolution).

The single GeoJSON file corresponding to the above image is shown below (which is 1.1 MiB uncompressed).

checkout the “main” branch).It was quite fun to think of the logic of walking a path around a rectilinear grid (the GitHub repository contains a diagram that I drew to help me figure it out). The program is surprisingly fast too. Unfortunately, merging the CSV files to create the GeoJSON file is quite slow because some of the smaller Polygons are holes in some of the larger Polygons and doing the checks for this is slow when thousands of Polygons have been found. The other hindrance that I had to contend with was that the Shapely user manual says that a [Multi]Polygon can only touch itself once (hence some square corners needed chamfering).